- Execute Command Activity In Datastage

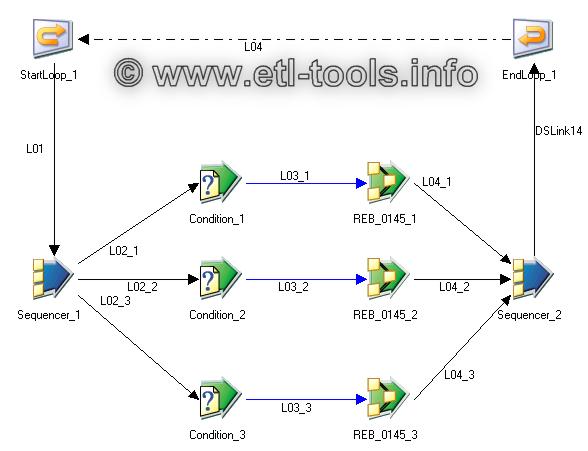

Use a Sequence job to process a list of files. Example: delete all the datasets in a folder. All the files in a folder that match the pattern '.ds' must be removed using the orchadmin command. The folder name is a parameter to the sequence.

Step 1: Generate the list of files. Add an Execute Command stage whose command line generates a comma-separated list of the files.

Command Stage is an active stage that can execute various external commands, including server engine commands, programs, and jobs from anywhere in the IBM® InfoSphere® DataStage® data flow. Command Stage is only supported on Windows.
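As a rough illustration of Step 1, the sketch below builds a comma-separated list of the '.ds' files in a folder. It is only one possible command line, not necessarily the one the original sequence used; the function name list_datasets and its folder argument are made up for this example.

```shell
# list_datasets DIR
# Print every *.ds file in DIR as a single comma-separated line.
# (Hypothetical helper; in a sequence, DIR would come from the folder parameter.)
list_datasets() {
    dir=$1
    list=""
    for f in "$dir"/*.ds; do
        # If nothing matches, the shell leaves the pattern as-is; skip it.
        [ -e "$f" ] || continue
        list="${list:+$list,}$f"
    done
    printf '%s\n' "$list"
}
```

A later step of the sequence could then loop over that list and hand each name to orchadmin for removal.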

How to Run a DataStage Job from the UNIX Command Line:

Most data warehousing projects require jobs to run in batches at specified time slots. In such cases the DataStage jobs are usually scheduled with an external scheduling tool such as ESP Scheduler, Control M or Autosys.

This is made possible by writing scripts that run your jobs through the command line. The command line is a very powerful interface to DataStage that lets you do more than just run a job normally. The guides in the DataStage documentation are very helpful for exploring what can be done through the command line.

However, this section gives the basics you will need to carry out your execution.

In UNIX, the DataStage home directory location is specified in the ".dshome" file, which is present in the root directory. Before you can run your DataStage commands you have to run the following commands:

cd "$(cat /.dshome)"

This changes the location to the home directory. By default this is /opt/IBM/InformationServer/Server/DSEngine

. ./dsenv > /dev/null 2>&1

This sources the dsenv file, which sets all the environment variables DataStage needs. Without doing this, the DataStage commands won't run from the command prompt.
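The two setup steps can be wrapped in a small function so that every scheduler script starts the same way. This is a minimal sketch: the function name load_ds_env and its optional argument (an alternative location for the .dshome file, handy for trying it out) are assumptions of this example, not part of DataStage.

```shell
# load_ds_env [DSHOME_FILE]
# Source dsenv from the engine directory recorded in DSHOME_FILE
# (defaults to /.dshome). The optional argument is an assumption added
# here so the function can be tried against a test directory.
load_ds_env() {
    dshome_file=${1:-/.dshome}
    if [ ! -r "$dshome_file" ]; then
        echo "cannot read $dshome_file" >&2
        return 1
    fi
    engine_dir=$(cat "$dshome_file")
    if [ ! -r "$engine_dir/dsenv" ]; then
        echo "no readable dsenv in $engine_dir" >&2
        return 1
    fi
    # Same two steps as above: change to the engine directory, then source dsenv.
    cd "$engine_dir" && . ./dsenv > /dev/null 2>&1
}
```

Because dsenv is sourced rather than executed, the variables it sets stay available to the rest of the calling script.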

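After you have done this, you can use any DataStage command to interact with the server. The main command you can use is the 'dsjob' command, which is used not only to run jobs but for a wide variety of other tasks.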

To run a job:

Using the dsjob command you can start, stop or reset a job, or run it in validation mode.

dsjob -run -mode VALIDATE|RESET|RESTART project_name job_name

Specify exactly one of the modes. With -mode VALIDATE the job runs in validation mode; use RESET or RESTART instead, depending on the type of run you want.

If you want a normal run, you do not need to specify the -mode keyword, as shown below:

dsjob -run project_name job_name|job_name.invocationid

Supplying job_name.invocationid runs the job with that specific invocation id.
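A scheduler usually needs to know whether the run succeeded. The sketch below uses dsjob's -jobstatus option, which waits for the job to finish and returns an exit code derived from the job status. The mapping used here (1 for a clean finish, 2 for a finish with warnings) is an assumption that should be confirmed against your own installation, and the function name run_and_check is made up for this example.

```shell
# run_and_check PROJECT JOB
# Run a job, wait for it to finish, and translate dsjob's exit code.
# Assumption: with -jobstatus, exit code 1 means "finished OK" and 2 means
# "finished with warnings"; check this against your own installation.
run_and_check() {
    rc=0
    dsjob -run -jobstatus "$1" "$2" || rc=$?
    case $rc in
        1) echo "$2 finished OK" ;;
        2) echo "$2 finished with warnings" ;;
        *) echo "$2 did not finish cleanly (dsjob exit code $rc)" >&2
           return 1 ;;
    esac
}
```

The function itself returns 0 only for the two "finished" cases, so a scheduler can treat any other return value as a failed batch step.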

If you have parameters or parameter set values to set, you can pass them as shown below:

dsjob -run -param variable_name="VALUE" -param psParameterSet="vsValueSet" project_name job_name
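When a job takes many parameters, repeating -param by hand gets error-prone. The following sketch shows one way a wrapper could build the option list; the function name run_with_params and its calling convention are hypothetical.

```shell
# run_with_params PROJECT JOB [NAME=VALUE ...]
# Turn every NAME=VALUE argument into a "-param NAME=VALUE" pair for dsjob.
run_with_params() {
    project=$1
    job=$2
    shift 2
    for pair in "$@"; do
        # Drop the original argument and append it again, prefixed by -param.
        shift
        set -- "$@" -param "$pair"
    done
    dsjob -run "$@" "$project" "$job"
}
```

It would be called as run_with_params project_name job_name variable_name=VALUE psParameterSet=vsValueSet. Quoting each pair keeps values with spaces intact.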

To stop a job:

Stopping a job is fairly simple. You might not need it often, but it is still worth a look. It acts the same way as stopping a running job in the DataStage Director.

dsjob -stop project_name job_name|job_name.invocationid

To list projects, jobs, stages in jobs, links in jobs, parameters in jobs and invocations of jobs:

The dsjob command can easily give you all of the above, depending on the keyword used. This is useful if you want a report of what is being used in which project.

The various commands are shown below.

dsjob -lprojects

gives you a list of all the projects on the server.

dsjob -ljobs project_name

gives you a list of the jobs in a particular project.

dsjob -lstages project_name job_name

gives you a list of all the stages used in your job. Replacing -lstages with -llinks, and adding a stage name after the job name, gives you a list of all the links on that stage. Using -lparams gives you a list of all the parameters used in your job. Using -linvocations gives you a list of all the invocations of your multiple-instance job.
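The listing options combine naturally into a simple inventory. This sketch prints one "project, job" pair per line for the whole server; it assumes that -lprojects and -ljobs write one name per line to standard output, and the function name list_all_jobs is made up for the example.

```shell
# list_all_jobs
# Print "project<TAB>job" for every job in every project on the server.
# Assumption: -lprojects and -ljobs print one name per line on standard output.
list_all_jobs() {
    dsjob -lprojects | while IFS= read -r project; do
        [ -n "$project" ] || continue
        # </dev/null keeps the inner dsjob from consuming the project list.
        dsjob -ljobs "$project" < /dev/null | while IFS= read -r job; do
            [ -n "$job" ] || continue
            printf '%s\t%s\n' "$project" "$job"
        done
    done
}
```

Redirecting the result to a file gives a tab-separated list that opens directly in a spreadsheet.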

To generate reports of a job:

You can get the basic information of a job by using the '-jobinfo' option as shown below:

dsjob -jobinfo project_name job_name

Running this command gives you a short report of your job, which includes the current status of the job, the name of any controlling job, the date and time when the job started, the wave number of the last or current run (an internal InfoSphere DataStage reference number) and the user status.
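Scripts often need just the status out of that short report. The sketch below extracts it with sed; it assumes the report contains a line of the form "Job Status : RUN OK (1)", which you should verify against the actual output on your server. The function name job_status is hypothetical.

```shell
# job_status PROJECT JOB
# Print the text after "Job Status :" from the -jobinfo report.
# Assumption: the report contains a line such as "Job Status : RUN OK (1)".
job_status() {
    # 2>&1 so the line is found whichever stream dsjob writes the report to.
    dsjob -jobinfo "$1" "$2" 2>&1 |
        sed -n 's/^Job Status[[:space:]]*:[[:space:]]*//p'
}
```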

You can get a more detailed report using the command below:

dsjob -report project_name job_name BASIC|DETAIL|XML

BASIC gives a report with basic information such as the start and end time of the job, the time elapsed and the current status of the job. DETAIL, as the name indicates, gives a very detailed report on the job, down to the stage and link level. XML gives a detailed report as well, in XML format.

To access logs:

You can use the command below to get the latest 5 fatal errors from the log of the job that was just run:

dsjob -logsum -type FATAL -max 5 project_name job_name

You get different types of log entries depending on the keyword you specify for -type. The full list of allowable types is available in the help guide.
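A small wrapper can supply sensible defaults and reject a mistyped entry type before dsjob is called. This is a sketch only: the function name log_summary is made up, and the list of types in the case statement is the set I believe dsjob accepts, so confirm it against the help guide for your version.

```shell
# log_summary PROJECT JOB [TYPE] [MAX]
# Show up to MAX (default 5) log entries of TYPE (default FATAL).
log_summary() {
    entry_type=${3:-FATAL}
    max=${4:-5}
    case $entry_type in
        # Assumed list of valid types; check the dsjob help for your version.
        INFO|WARNING|FATAL|REJECT|STARTED|RESET|BATCH|ANY) ;;
        *) echo "unsupported log type: $entry_type" >&2
           return 2 ;;
    esac
    dsjob -logsum -type "$entry_type" -max "$max" "$1" "$2"
}
```

For example, log_summary project_name job_name WARNING 10 would ask for the ten most recent warnings.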

There are a number of other options for getting different log information. You can explore them in more detail in the developer guide. With the DataStage commands you can administer jobs, run jobs, maintain jobs, handle errors, prepare meaningful job logs and even prepare reports. The possibilities are endless. If you like to code, you won't mind spending time exploring the available command line options.

There have always been requirements in which you need to run certain UNIX commands or shell scripts within DataStage. Although not in popular demand, it is still something that belongs in the 'good to know' category. There are a number of different ways you can do this. I will try to explain the methods I have tried out.

Specifying the shell script in job properties

If you have any pre- or post-processing requirements that have to run along with the job, and you have implemented them in a shell script, you can specify the script, with its parameters, in the Before-job or After-job subroutine section of the job properties. You should first select the 'ExecSH' option from the drop-down list to indicate that you are going to run a script.
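As an illustration of the kind of pre-processing script this is meant for, the sketch below checks that an input file exists and is not empty before the job reads it. Everything in it is hypothetical (the function name check_input, the script name mentioned in the comment, the messages), and it assumes that a non-zero exit status is reported back to the job as a failure.

```shell
# check_input FILE
# Succeed only if FILE exists and is not empty; otherwise print a message
# and return a non-zero status so the caller can treat it as a failure.
check_input() {
    if [ ! -s "$1" ]; then
        echo "input file missing or empty: $1" >&2
        return 1
    fi
    echo "input file ready: $1"
}

# When saved as a script (hypothetical name check_input.sh), the file path
# would be the parameter entered after the script name in the job properties:
#   check_input "$1"
```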

Using the External filter stage

The External Filter stage allows us to run UNIX filters during the execution of a job. Filters are programs that read data from the input stream, modify it and send it on to the output stream; you will normally have used them throughout your UNIX career. A filter that everyone is bound to have used is 'grep'. Other common filters are cut, cat, head, sort, uniq, perl, sh, wc and tail. The External Filter stage allows us to run these commands while the job is processing data. For example, you can use the grep command to filter the incoming data. Shown below is a simple design.

As per the command, we are using grep to filter the data for rows containing the number 18.
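To make the effect of such a filter command concrete, the snippet below runs grep from an ordinary shell over a few made-up rows, outside DataStage. The sample data is invented for the illustration; the point is only the difference between keeping the matching rows and dropping them.

```shell
# Three made-up input rows; the last column is an age.
rows='101,Anna,18
102,Ben,25
103,Cara,18'

# grep 18 passes on only the rows that contain "18" ...
kept=$(printf '%s\n' "$rows" | grep 18)
# ... while grep -v 18 passes on only the rows that do not.
dropped=$(printf '%s\n' "$rows" | grep -v 18)

printf 'kept:\n%s\ndropped:\n%s\n' "$kept" "$dropped"
```

Note that a plain grep 18 matches the digits anywhere in the row, so a key such as 1180 would also be kept. A stricter pattern is needed if only one column should be tested.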

Using the sequential file stage

Although not a frequently used option, the Sequential File stage does allow us to run UNIX filter commands inside it. In this example I have written a shell script that can be called inside the stage. The place where you have to enter the command is shown below.

The shell script was a straightforward one that reads directly from the input.

    #!/bin/bash
    #------------ Shell script ------------#
    # Read each row from standard input and append it, tagged, to the output file.
    while IFS= read -r data; do
        echo "$data, Modified by emerson" >> E:/Sample/sample.txt
        #-- You can add your processing logic here --#
    done
    #------------ End of script ------------#

The output would be as below.